'Safe and Effective' — The AI Moratorium Campaign Is a Trojan Horse for Political Censorship

A movement to crush American flourishing.

The forces that seek to thwart technological progress in America are being framed in the news media as mere advocates for debate, but they’re already pursuing a coordinated political campaign with two distinct authoritarian objectives.

Halting American AI development, particularly on the data center front, is the visible face of the movement right now, and it is represented across the nation, in D.C., and across the political spectrum. The Artificial Intelligence Data Center Moratorium Act, introduced this spring by Sen. Bernie Sanders and Rep. Alexandria Ocasio-Cortez, sits atop a dense and well-funded activist infrastructure, which often comes in the form of Effective Altruist (EA)-aligned advocacy shops. Dozens of local moratoriums have already passed in counties across the country, and statewide bills have been filed in twelve legislatures. The billionaire donor-funded machinery behind this movement has produced endless rallies, press conferences, and media coverage about water usage and electricity bills. It’s even infecting the considerably conservative state of Florida through astroturf EA-adjacent groups like Humans First, an AI doomer-funded outfit that falsely portrays itself as right-wing.

The second objective is more direct, but it promises to be “safe and effective.”

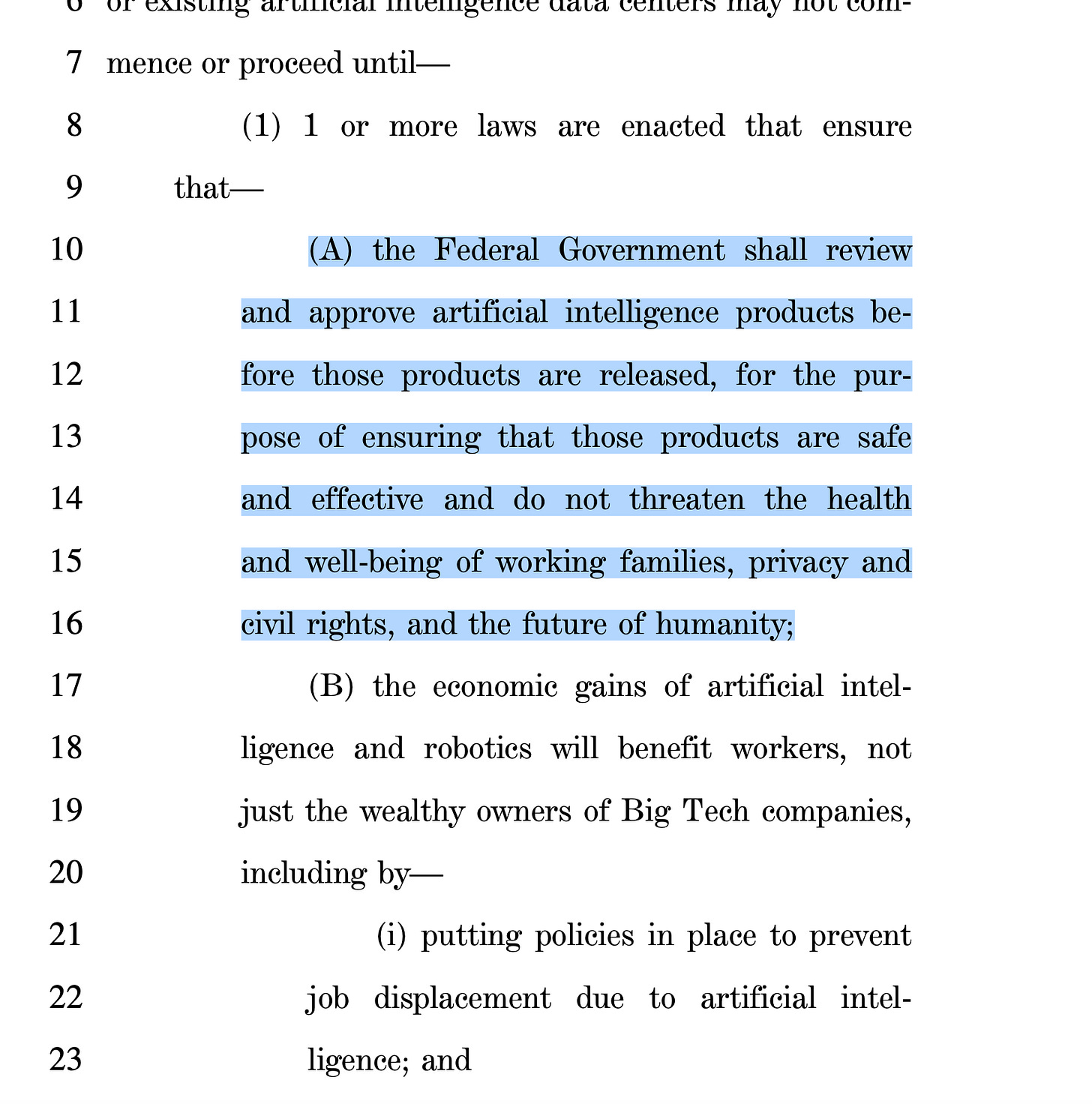

Section 3 of the Sanders-AOC bill contains the coalition’s real policy ambition. The provision explains why many of the same activist groups that spent the last decade advancing the climate narrative have now taken an urgent interest in server racks. The conditions for lifting the proposed moratorium are premised on ensuring that “the Federal Government shall review and approve artificial intelligence products before those products are released, for the purpose of ensuring that those products are safe and effective and do not threaten the health and well-being of working families, privacy and civil rights, and the future of humanity.”

This is not a safety standard or a regulatory disclosure rule. It marks an ambition for federal pre-approval of software, which, taking a page out of the COVID playbook, must come with a “safe and effective” stamp of approval.

Every AI product (every model, every feature, every release) would have to pass through a government licensing office before reaching a user. Of course, this would both cripple innovation and ruin the AI software entirely, making it subject to the whims of political bureaucrats. The bill does not specify which agency. It does not define “safe and effective.” It does not bound what constitutes a threat to “the future of humanity,” a standard so elastic it can mean whatever the presiding administration wants it to mean that day.

Once a licensing apparatus exists, the politics of who gets approved and who doesn’t does the rest of the work. An AI producing text Washington, D.C. finds “harmful” becomes an AI that threatens “privacy and civil rights” or “working families.” A large language model giving non-approved answers on the climate narrative, on elections, or on countless social issues does not have to be banned. It simply never gets approved. It amounts to pre-publication review by the executive branch. It is the architecture of a censorship regime. It’s also anti-competitive by design, which is why AI heavyweights like Anthropic tend to link up with the “pause AI” groups so frequently.

Pre-approval is only one veto point among several. The same section demands laws empowering “communities” to reject data centers, banning all federal, state, and local subsidies for their construction, and mandating union labor under prevailing wage rules at every facility. The bill is not trying to make AI infrastructure safer or beneficial to locals. It is instead trying to make every piece of it subject to permits and vetoes, akin to the notoriously doomed California high-speed rail project.

Should these efforts succeed, America would adopt a pre-approval regime, then use hardware export controls to pressure allies and competitors to adopt comparable censorship and regulatory regimes. The result is an anti-human authoritarian system, one that conveniently resembles the system the Chinese Communist Party already operates domestically.

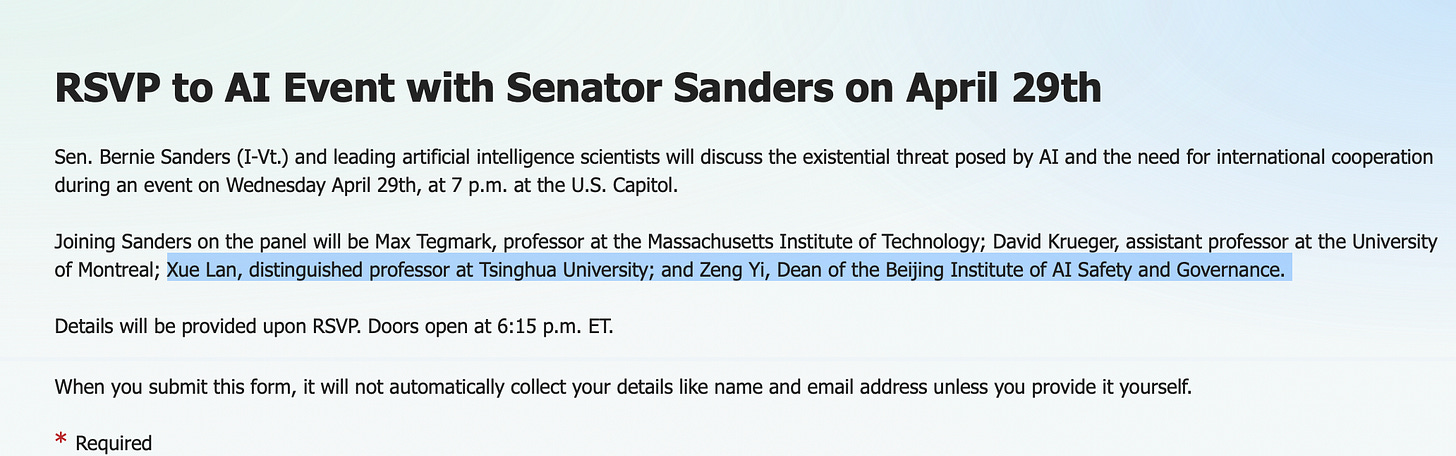

The optics of an upcoming bill-promotion event at the Capitol fit this logic perfectly. Of the four panelists Sen. Sanders has assembled to warn of AI “existential risk,” two (Zeng Yi of the Beijing Institute of AI Safety and Governance and Xue Lan of Tsinghua University) hold senior positions in the Chinese government’s AI policy apparatus. Zeng sits on the National Governance Committee of Next Generation Artificial Intelligence under Beijing’s Ministry of Science and Technology. They are joined by the Future of Life Institute’s Max Tegmark, a longtime advocate of shutting down AI indefinitely and of America surrendering its sovereignty to a “global governance” regime. Although Tegmark is a scorched-earth leftist, his ideas have made inroads in the state of Florida under the guise of protecting children from harm. This is the political and intellectual coalition driving the legislation and the broader movement nationwide. And they are barely hiding the real agenda.

The data center moratorium is a cudgel to set up the censorship and wrongthink regime. It is a plan to halt American AI progress and install a federal content licensing system in the wreckage. Of course, none of this is really about safety. It is a speech control proposal both in the state houses and in Washington, D.C., and one of the worst we’ve encountered since the previously attempted Biden-era COVID censorship regime. So it shouldn’t be much of a surprise that the narratives and the language bear such a striking resemblance.

Rest assured, the very same obedient tools who reflexively fell for and perpetuated the contrived and meaningless "Safe and Effective" shibboleth during the Plandemic will be at the ready to do it all over again.

On another level, the Left fears widespread adoption of A.I. by the masses because it threatens the Left's five-decade-old effort to dumb down these same masses through the wholesale destruction of the education system, mass media and entertainment cannons of propaganda, along with a pathological cultural need to belong and conform to the tribe. Independent thought is heresy.

Now you have me rethinking my objections to AI policy. I’d rather jump into a volcano than be on the same side of an issue as Crazy Bernie and AOC. How stupid.