The AI Safety Racket: How Leftist Activists Use Children to Smuggle AI Regulation into Red States

It is not a grassroots movement, but a sophisticated operation with a Republican mask.

The playbook follows a consistent pattern in states across America: find Republican officials and legislators who are skeptical of AI, frame the regulation around children, commission polling, hire local Republican credibility, and move fast before the funding sources become the story.

The network running this strategy was built with dark money, staffed by leftist operatives previously connected to the Effective Altruism (EA) movement, and has been executing across red states simultaneously, with the explicit goal of creating a regulatory patchwork that entrenches these censors at the top of the AI governance framework.

Effective Altruism has always been, at its core, a power acquisition strategy dressed in utilitarian language. These forces have spent years identifying the institutions and regulatory levers that control the most consequential decisions, and systematically placing EA-aligned personnel, funding, and ideas inside them before anyone notices the ideological uniformity. The movement doesn't announce itself as a political faction. Instead, it announces itself as a moral framework, which is a far more effective entry credential into government offices, university fellowships, and political coalitions than any party affiliation could ever be.

On January 14, an organization called Encode AI cut a $10,000 check to the campaign of Doug Fiefia, a freshman Republican state representative from Herriman, Utah. Five days later, Fiefia introduced HB 286, the Artificial Intelligence Transparency Act. The timing was not a coincidence.

What followed was a textbook influence operation: bipartisan in appearance, but nearly a carbon copy of California-style hyper-regulatory activism, transplanted into one of the reddest legislatures in America. It failed only because the White House pushed back forcefully, and this dark money network continues to infiltrate statehouses across the country with seemingly infinite funding, pursuing AI regulatory bills that deliver power and influence back to the network.

HB 286 looked, on its face, like exactly what its sponsors said it was: a child safety measure. It would require large AI developers to publish safety plans, protect child users, and provide whistleblower protections for employees who report safety concerns. Actor Joseph Gordon-Levitt flew to Salt Lake City to testify in favor of it. Parents testified about chatbots that had groomed and psychologically damaged their children. The polling was overwhelming: the bill drew 91% support among Republicans, according to numbers commissioned and released by the Secure AI Project, one of HB 286’s primary backers.

Gordon-Levitt is not merely a sympathetic celebrity who wandered into a Utah hearing. He has been an active EA movement participant since at least 2017, when he spoke at EA Global in San Francisco alongside EA co-founder Will MacAskill. His wife, Tasha McCauley, has sat on the board of Effective Ventures, the organization that directly funds the Center for Effective Altruism. The actor testifying on behalf of children in Salt Lake City was, in other words, part of the same network that funded the operation that put him there.

Look closer at the bill’s language, however, and the child safety framing starts to look like mere window dressing. HB 286 went well beyond merely “protecting children,” as it explicitly targeted “frontier developers,” which are companies with over $500 million in annual revenue training AI models above a specific computational threshold. That is not a child safety statute. It is a compliance framework aimed at a narrow class of large AI companies. And it was modeled almost word-for-word on a controversial piece of legislation called California’s SB 53, which AI heavyweight Anthropic supported while simultaneously lobbying against the Trump administration’s federal preemption effort. The bill’s architecture served Anthropic’s competitive and regulatory interests. The child safety framing served Encode AI’s lobbying interests in Utah.

Encode AI is not a neutral advocacy group. It receives funding from the Future of Life Institute, which is itself funded through the EA donor network anchored by Facebook co-founder Dustin Moskovitz, who has donated hundreds of millions of dollars to Democrats and is reportedly the single largest funder of the Biden-Harris Super PAC apparatus.

Speaking of “child safety,” Moskovitz, like many prominent EAs, has no children, according to reports. In EA circles, children are often treated as a utilitarian calculation and nothing more. Does Dustin Moskovitz really love children so much that he’s spending his fortune to “protect” them from AI? Or are the EAs and their ideological offshoots bamboozling legislators by appealing to their value systems, while propagandizing the public to secure power, because they believe the ends justify the means?

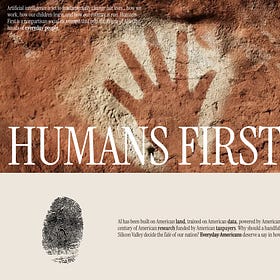

This operation did not begin in Salt Lake City. As The Dossier reported this week, the same EA-funded machine is running a parallel influence campaign at the national level through an organization called Humans First. The group was incorporated by two staffers at the Center for AI Safety, an organization that has received more than $12 million from Moskovitz’s Coefficient Giving, and it was engineered to look like a populist uprising. Its architects recruited Joe Allen, a close associate of Jeffrey Epstein publicist Steve Bannon, as a co-founder, then built a clean website, a town hall tour, and a tagline: “a nonpartisan social movement that puts the future of AI in the hands of everyday people,” with no mention of who was funding any of it. The Utah operation is the state-level version of the same playbook: different faces, different framing, identical architecture. At the national level, the target was Bannon. In Utah, it was a freshman Republican legislator and a former House Speaker. The child safety framing of HB 286 and the populist branding of Humans First serve the same function: to make a Moskovitz-funded regulatory agenda look like something it isn’t.

The Secure AI Project (SAIP), which co-sponsored the lobbying effort in Utah, is even more directly connected. Its CEO and co-founder, Nick Beckstead, spent seven years at Open Philanthropy, Moskovitz’s primary activist vehicle. Open Philanthropy was recently rebranded as Coefficient Giving, likely because the group’s ties to FTX founder Sam Bankman-Fried left it in need of a fresh coat of paint.

To provide Republican cover, SAIP hired Greg Curtis, the politically significant former Utah Republican House Speaker, to lobby on the bill’s behalf. It also commissioned favorable polling and handed the data to Encode, which then ran a massive campaign presenting opposition to HB 286 as an “attack on children.” By the time anyone asked who was funding the operation, the bill was already through committee.

The Trump Administration, which seeks to have the federal government in charge of regulating AI policy, weighed in on the matter.

On February 12, the White House Office of Intergovernmental Affairs sent a letter to Utah Senate Majority Leader Kirk Cullimore Jr. that was remarkably blunt: “We are categorically opposed to Utah HB 286 and view it as an unfixable bill that goes against the Administration’s AI Agenda.” Behind the scenes, White House officials held multiple conversations with Fiefia directly, urging him to stand down. The legislative session closed March 6. HB 286 died without a floor vote.

The significance of that sequence deserves emphasis. The first state where the Trump administration had to fight to protect its AI agenda was not California, New York, or Colorado. It was Utah, a state where Republicans hold a legislative supermajority, where Donald Trump won by 20 points in 2024, and where a Moskovitz-funded network had successfully recruited a Republican freshman, hired a former Republican House Speaker, mobilized parents, and moved a California-model AI bill to the edge of passage before federal intervention stopped it.

Utah was not an isolated operation. Nebraska’s LB 1083, nearly identical to HB 286, had both Encode AI and the Secure AI Project registered to lobby. Texas’s TRAIGA was signed by Governor Abbott, with sixteen Republican senators defending it against White House preemption pressure. Florida, Georgia, and Tennessee have parallel legislation in various stages. This week, Sen. Marsha Blackburn (R-TN) unveiled a new AI framework with language eerily similar to the state efforts. However, The Dossier’s sources on Capitol Hill say it has little to no chance of passing.

The White House stopped the ideologues’ AI takeover gambit in Utah, but the fight is quickly fragmenting into independent state skirmishes on dozens of fronts. How many states will the dark money network reach before the public realizes the true motives of the individuals and groups behind these AI bills?
