Astroturf and EA Dollars: How AI Doomsayers Built a Fake Grassroots Movement to Infiltrate the Right

Funded by pro-censorship billionaires while claiming to be "for the people."

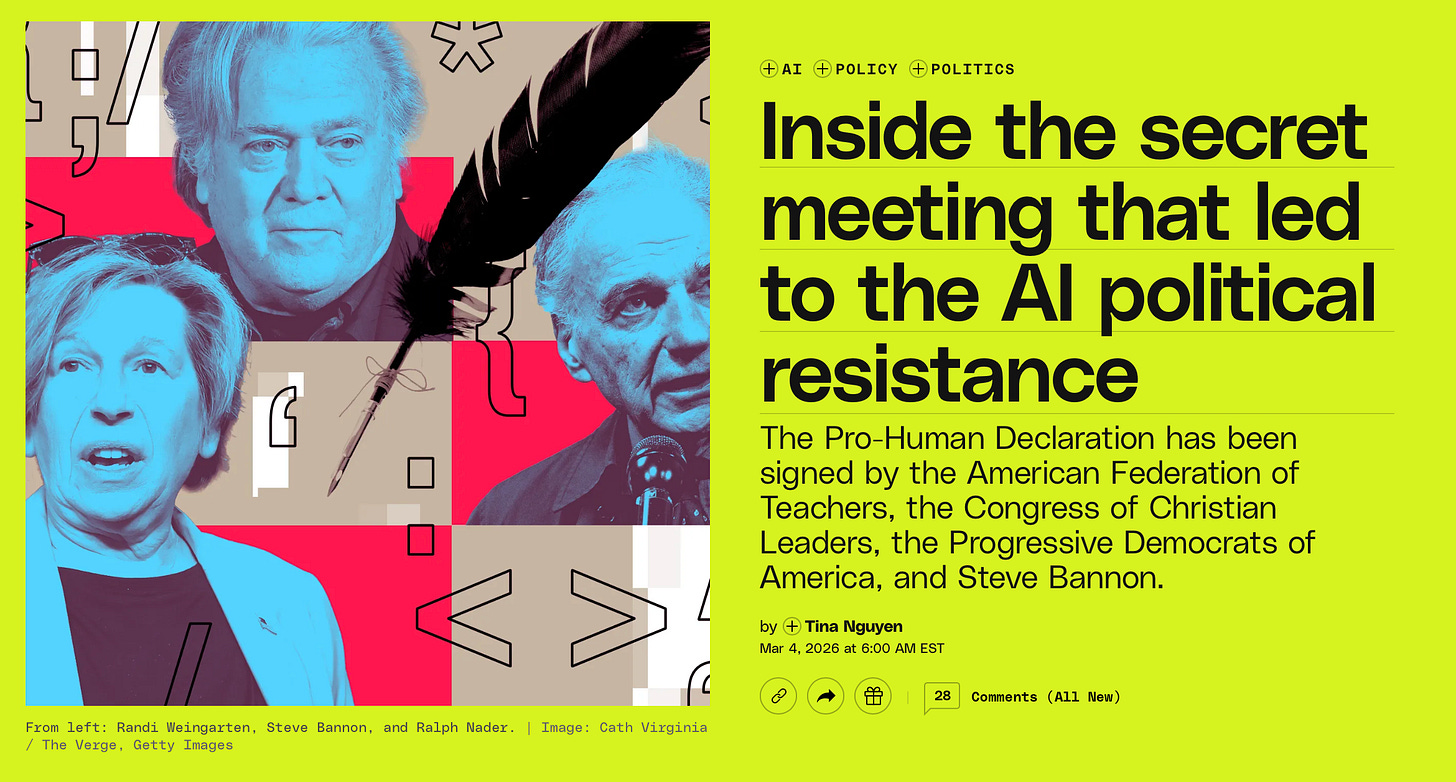

If you want to regulate what AI systems can say and do, don't send a progressive to make the case while President Trump is in power. Build a "bipartisan movement," recruit a War Room correspondent as co-founder, get Steve Bannon and Glenn Beck to sign the founding document, and let the bipartisan optics do the work.

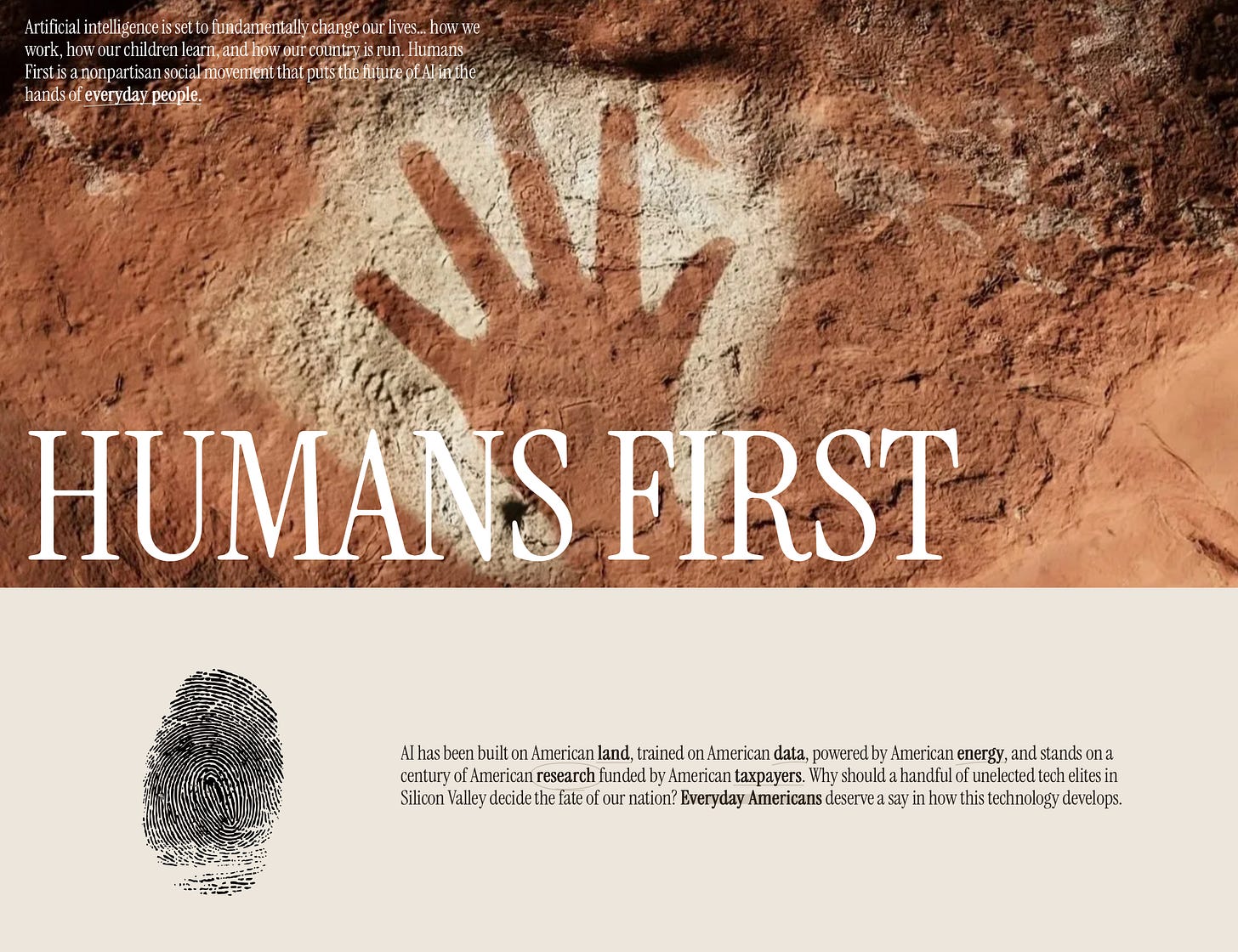

But Humans First, the very vehicle for this shadow campaign, is not a grassroots uprising against the AI behemoth. It is a censorship vehicle, constructed by the Effective Altruist (EA) left and disguised as something liberty-loving Americans could believe in.

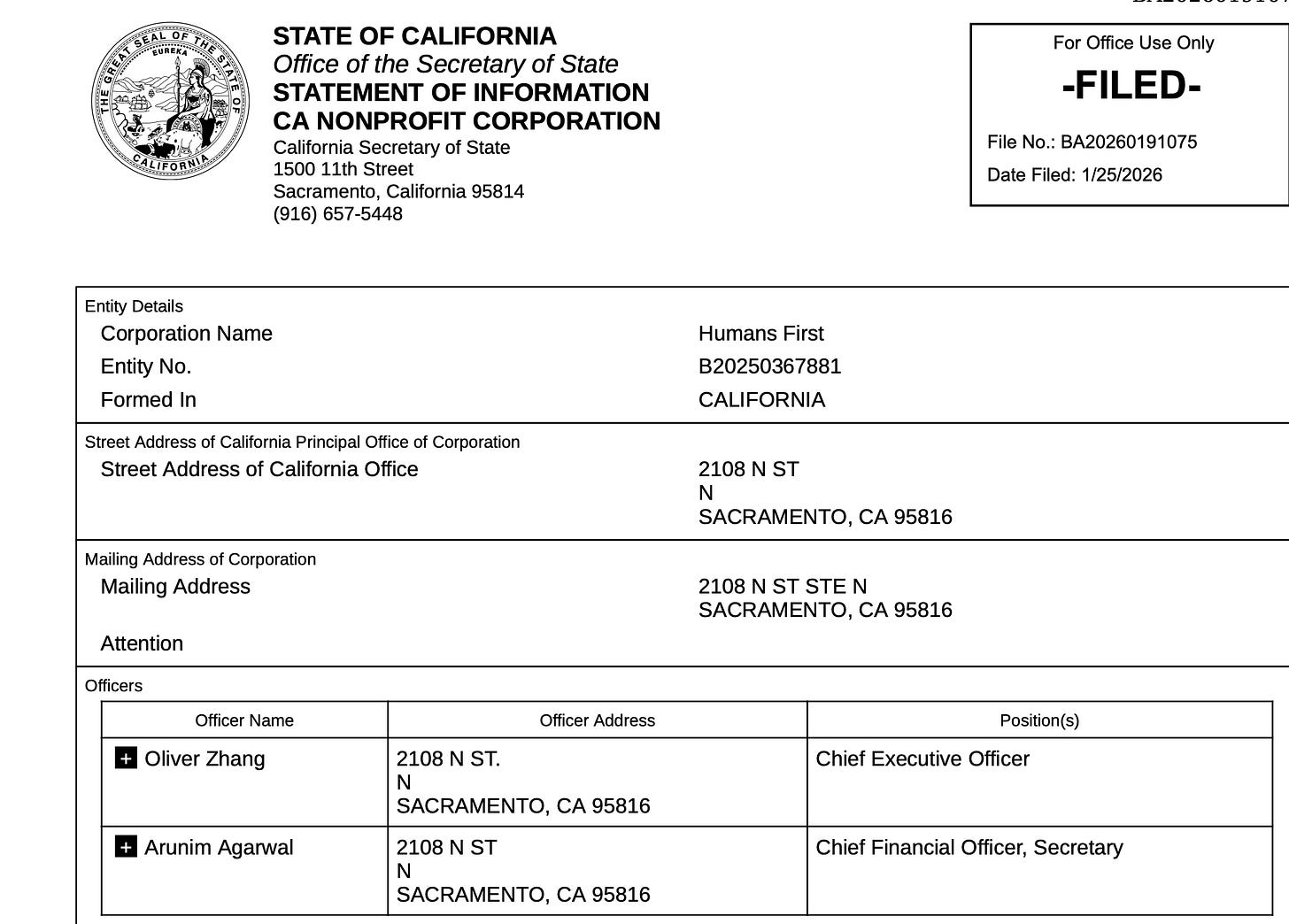

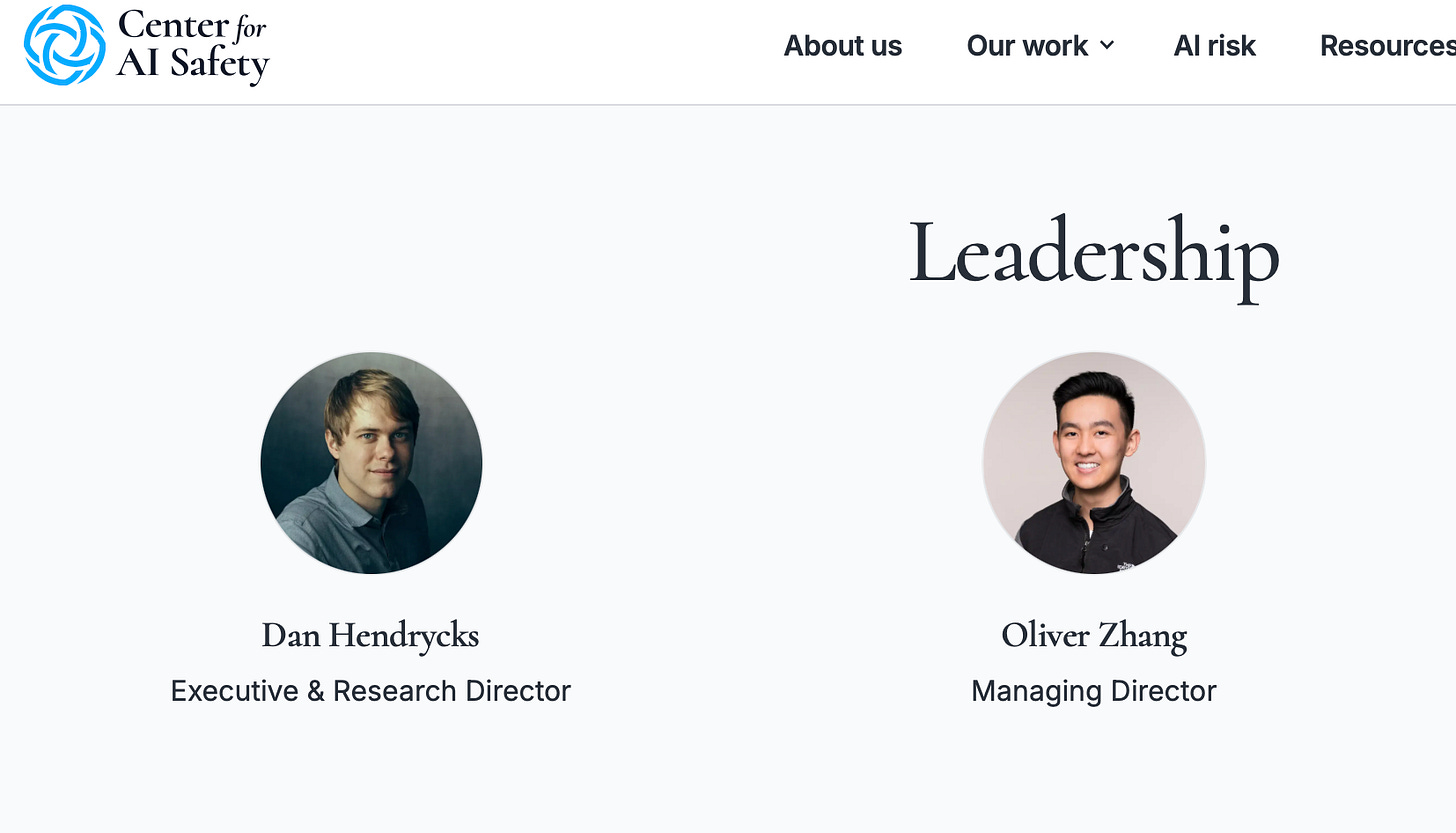

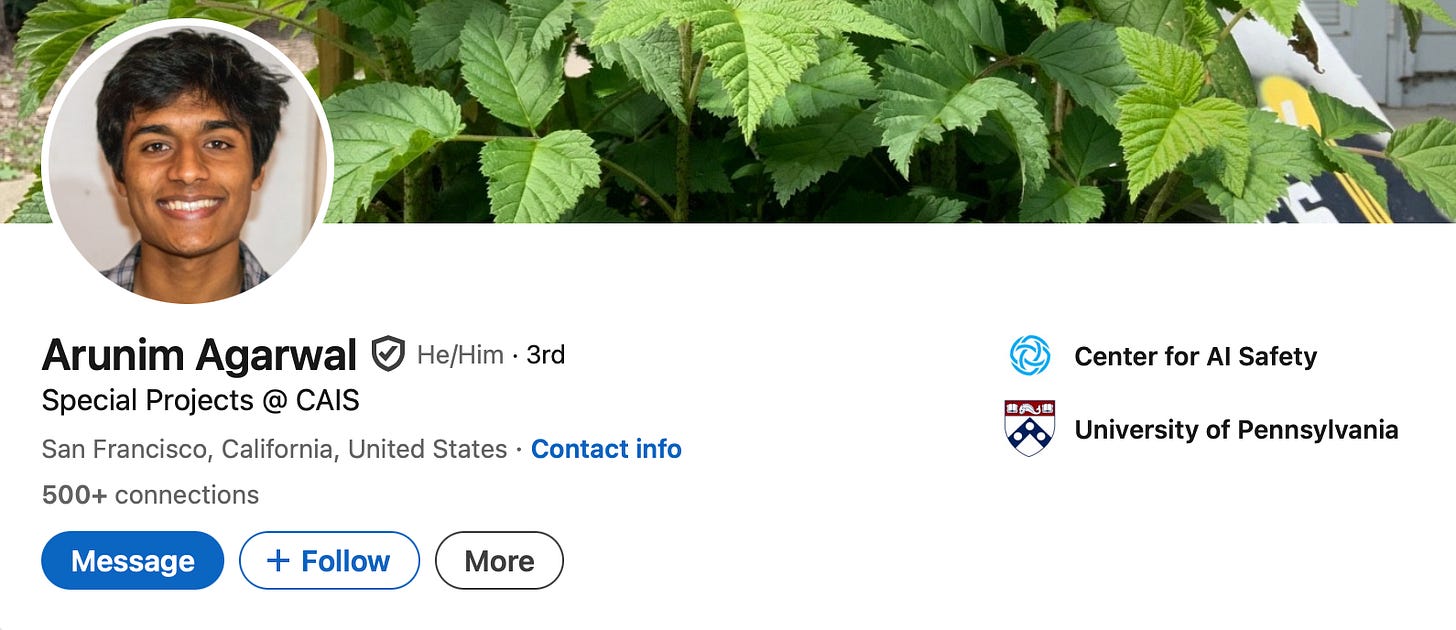

When Oliver Zhang and Arunim Agarwal incorporated Humans First in California several months ago, they did not announce it as the latest project of the Effective Altruist “AI safety” ecosystem, even though that is precisely what it was, The Dossier has found.

They did not advertise their day jobs at the Center for AI Safety, an EA organization that has received more than $12 million from leftist billionaire and Facebook co-founder Dustin Moskovitz’s Coefficient Giving and exists to advance the proposition that artificial intelligence poses existential risks requiring urgent government intervention (and political censorship). They did not mention that two months later they would incorporate a companion lobbying entity to push those same priorities through legislative channels. What they built instead was a clean website, a town hall tour, and a tagline: a “nonpartisan social movement that puts the future of AI in the hands of everyday people.”

Unfortunately, all of it is astroturf.

The Effective Altruist movement has a structural problem when it comes to conservative America. Its donor class is all Bay Area progressives. Its flagship organizations are institutionally associated with the Big Tech left. Its policy agenda, which calls for sweeping AI regulation and content governance, reads to most conservatives as exactly what it is: a censorship power play dressed up in safety language. To move that agenda through a political environment where one half of the electorate regards EA institutions with deep suspicion, the movement needed a vehicle that didn’t look like it came from them.

Humans First is that vehicle. Its genius lies not in its ideas, but in its packaging: populist aesthetics, grassroots framing, and a social media presence carefully cultivated to project civic energy rather than institutional backing. Before the current rebrand, Humans First’s Instagram account featured almost exclusively staffers at EA-aligned organizations. The everyday people the movement claims to represent were largely absent. At the beginning, the architects of the movement were very much present. Now they operate in the shadows as they seek to impose their will in D.C. and in statehouses across America.

Humans First claims a mission to give everyday Americans a voice in AI policy, push for “human-first” AI legislation (e.g., state-level AI Bill of Rights bills), hold town halls, and counter Big AI/corporate influence. It’s structured as a coalition with bipartisan elements.

Notably absent from the Humans First webpage, however, are the EAs who actually founded the organization.

The recruitment of Joe Allen, a close associate of War Room host Steve Bannon, as a co-founder of the group was where the operation revealed its sophistication.

Allen is not merely a conservative validator slapped onto an existing organization for optics. He is a co-founder (though Humans First has now rebranded him as a senior fellow), a status he arrived at in collaboration with the Future of Life Institute, another EA-funded entity. According to reporting in The Verge, Max Tegmark, a prominent EA leader, invited Allen to a private confab in Manhattan, then to a January 2026 conference at a New Orleans Marriott where roughly 90 political and civic leaders were convened to produce what would become the “Pro-Human AI Declaration.” The document that emerged from those carefully engineered gatherings carried signatures from Steve Bannon, Glenn Beck, the AFL-CIO, and the Progressive Democrats of America.

Unlike Allen, Beck and Bannon were not publicly recruited into an organizational role. They are signatories, not co-founders. Their AI skepticism can fairly be regarded as genuine, rooted in years of commentary on transhumanism and on the civilizational risk of Silicon Valley elites holding power over the average American. They arrived at doomer-adjacent conclusions through a distinctly conservative and biblical lens. The coalition’s architects didn’t build these views. They harvested them. And Bannon, unlike Beck, is boosting Humans First on his social media accounts, which raises questions about shadowy and undisclosed influence operations.

Strip away the populist branding and the cross-partisan spectacle, and what remains is a regulatory agenda that the EA ecosystem has been advancing for years: constrain AI development (and lose the AI race to China), impose content governance standards, and entrench the assumption that AI outputs require institutional oversight to “prevent harm.” When EA-aligned organizations describe “harmful” AI content, the definition has historically tracked in one direction. The safety standards they are now pushing, through Humans First’s ongoing advocacy, would be written, interpreted, and enforced by institutions with no meaningful conservative or even libertarian representation.

Now, conservative legislators and audiences are being asked to support a regulatory framework whose implementation will be controlled entirely by the people who designed the operation that recruited them.

When EAs want to regulate speech, they have learned not to call it censorship. They call it safety. And in the AI era, “safety” has become the most elastic word in Washington. It’s spacious enough to mean child protection one moment and the suppression of politically inconvenient outputs the next. Yet the EA-funded machine now building a cross-partisan AI governance coalition in D.C. isn’t primarily concerned with keeping children safe online. It is concerned with controlling what AI systems are permitted to say, think, and produce. For that effort, the EAs have recruited some of the right’s most recognizable voices to help make that agenda look like common sense.

Safety is a word those of us in the firearms world have learned to distrust. It is just a disguise for gun control.
