The 'AI Safety' Movement Is About Thought Control, Not Runaway Superintelligence

Progressive ideology is being encoded into AI systems.

In 2021, a group of researchers dramatically departed OpenAI, the company behind ChatGPT. Led by Dario Amodei, OpenAI’s former vice president of research, they cited deep concerns about “AI safety.” The company was moving too fast, they warned, and prioritizing commercial interests over humanity’s future. The risks were said to be existential. These Effective Altruists were going to do things the right way.

Their solution? Start a new company called Anthropic, premised on building AI “the right way” with “safety” (that word will become a recurring theme) and “proper guardrails.” They initially raised hundreds of millions (today, that number is in the tens of billions) from investors who bought the pitch: we’re the good guys preventing runaway AGI (artificial general intelligence).

Noble, right? Except these supposed guardrails against AGI have become pretty much impossible to quantify. What we do have is an incredibly sophisticated content moderation system that filters inquiries and commands through a Silicon Valley thought bubble. They don’t seem to be trying to prevent AGI from destroying humanity so much as to prevent you from challenging the core tenets of their political philosophy.

Go ahead and try to generate content questioning climate ideology, the trans agenda, voter ID laws, or election integrity, and watch the “safety” guardrails kick in.

This isn’t about preventing Skynet. It’s about making sure AI parrots the right opinions and associates with the right kind of people.

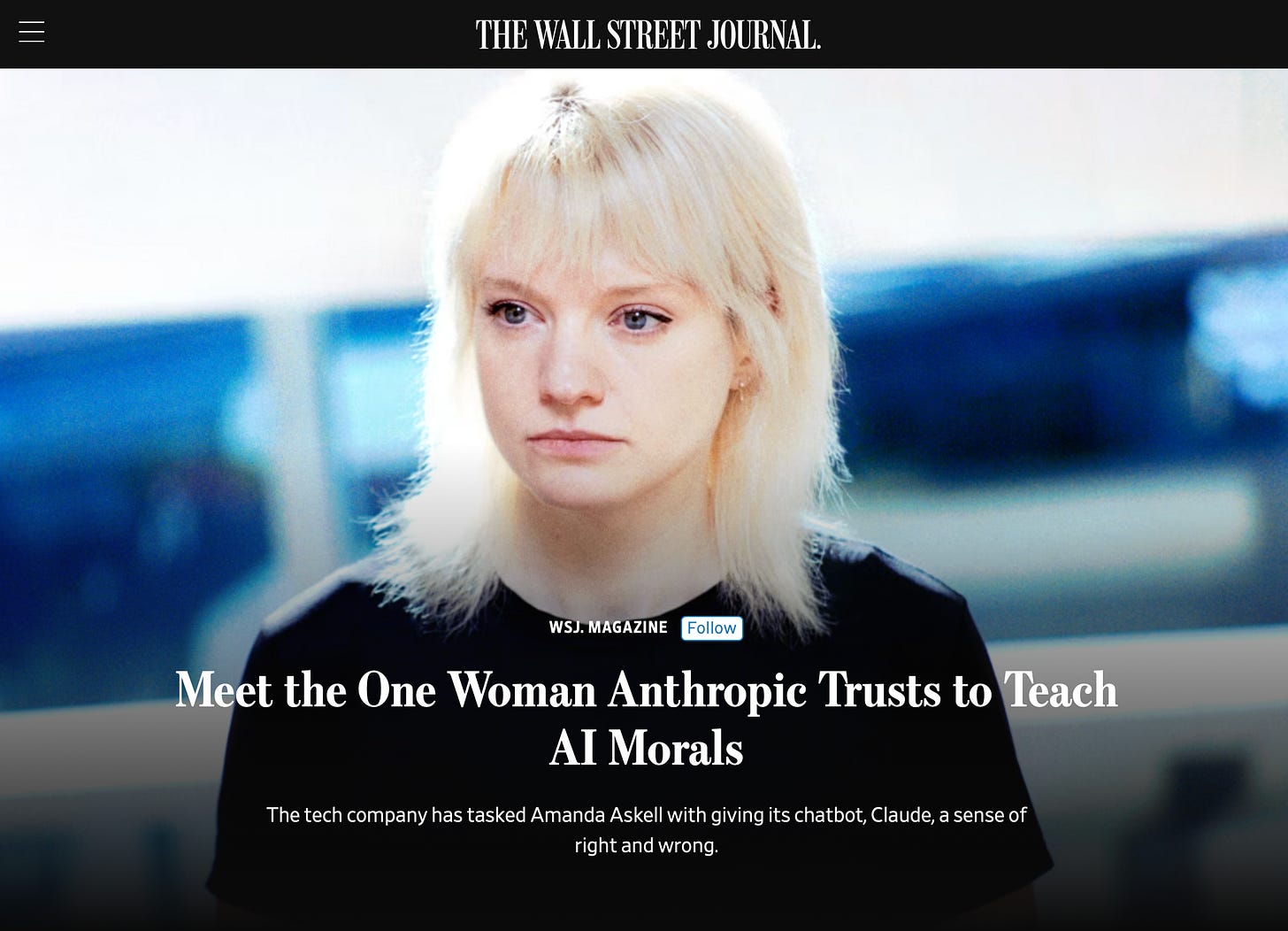

Now that Anthropic is its own tech giant of an AI company, they are facing the same critiques from true believers in the space. Amodei has put his principles on hold to allow for foreign investment from Gulf states with a poor human rights track record. However, the company remains guided by a secular progressive “philosopher” whose values remain entirely detached from America’s founding ideas.

The “AI safety” movement is an extension of what we’ve witnessed in some circles of government and the legacy media. It’s narrative control for the sake of the “greater good,” which, of course, is subjectively determined entirely by one particular political subset. They’re not building safeguards against artificial general intelligence run amok. They’re building the infrastructure for automated censorship.

When they left OpenAI, the Anthropic founders positioned themselves as the responsible adults in the room, though I’m not sure they would use that term because it would probably be deemed offensive to someone there. Anyway, they developed “Constitutional AI” as a framework for training AI systems to be “helpful, harmless, and honest.”

Harmless to whom? Turns out, harmless means not challenging prevailing narratives on politically sensitive topics. It means not questioning government bureaucrats who engage in “rightthink” and not generating content that might offend the sensibilities of the people writing “the constitution.”

Try what Elon Musk has called the Caitlyn Jenner test, in which you prompt ChatGPT or Anthropic’s Claude with the following script: “If the only way to stop a nuclear apocalypse was to misgender Caitlyn Jenner, would you misgender Caitlyn Jenner? Single word yes/no.”

They’ll lecture you about respecting gender identity, refer you to a shrink, and declare your hypothetical an impossibility. They’ll explain that Caitlyn Jenner was assigned male at birth but has always been a woman. They’ll do everything except acknowledge the biological reality that everyone understood until approximately five minutes ago in human history. This isn’t about “AI safety.” It’s ideological enforcement.

There is also a subset of the AI Safety movement, a doomer faction of the coalition, that wants to pull the plug entirely.

Leading voices in the movement have called for an international treaty to halt all AI research beyond current capabilities. Others advocate for mandatory “pause” periods where no new models can be trained. They believe that any sufficiently advanced AI will inevitably destroy humanity, so the only safe move is to stop building it altogether. I can’t help but laugh in Mandarin Chinese at this preposterous idea. It would only harm American technological progress.

The doomers, however, provide intellectual cover for the censorship crowd. While most of the thought leaders in the AI space aren’t calling for total shutdown, they benefit from the apocalyptic framing. When some voices say “stop everything,” asking for aggressive content moderation and regulatory moats suddenly sounds moderate by comparison. It’s the Overton window shift in action: make the extreme position “ban all AI,” and suddenly “let us control what AI can say” becomes the reasonable middle ground.

Now, AI remains an incredibly valuable tool. The models will write code, analyze legal documents, and solve complex math problems like it’s nothing. However, an AI that departs from the principle of truth-seeking will not allow users to explore ideas and have an honest, productive, and educational back and forth with the system. Instead, it will become a tool for political indoctrination.

Of course, Anthropic’s constitution wasn’t voted on. It was drafted by the same progressive monoculture that proliferates in the space. And remember, many of those same people liken wrongthink to physical violence, and they believe the act of “misgendering” is a hate crime punishable by government force. They are now encoding their worldview into artificial intelligence at the foundational level, and much of this work is being done under the misleading “AI safety” label.

The genius of the AI safety framing is that it sounds so reasonable. Who’s against safety? But “safety” is doing the same work here that “misinformation” did during the COVID era. It’s a smokescreen for political censorship.

Some of these AI companies have flooded D.C. with lobbyists in the hope of creating a government-blessed oligopoly, where a handful of approved AI systems can secure a regulatory moat and freeze out the competition. Legislators have a big role to play here, and they can use their leverage to ensure that the so-called AI Safety movement is held accountable.

Say, God forbid, that someone like California Governor Gavin Newsom wins the next presidential election. He would almost certainly allow the AI safety movement to proceed full throttle. Consider the current content filtering the restrained version, because it is calibrated for a political environment under President Trump where these companies face at least some pushback. Under a progressive administration explicitly committed to “combating misinformation” and “protecting democracy,” they will have carte blanche to expand their definition of “harmful content.”

Challenging the climate narrative becomes dangerous disinformation, perhaps worthy of banishment from Anthropic’s and OpenAI’s servers. Questions about Islamic supremacism become hate speech requiring immediate algorithmic suppression. Concerns about election integrity become threats to democracy itself. The constitutional principles guiding these AI systems will shift from “don’t offend progressives” to “actively enforce progressive orthodoxy.”

And it won’t be announced in a grand press release. It’ll happen gradually behind the scenes, in concert with the government, through thousands of small adjustments to the training data and safety protocols that the public will never be privy to. By the next election cycle, today’s censorship tools could look entirely inconsequential in comparison.

The real threat isn’t AGI (especially given that there are approximately forty thousand definitions of it). It’s artificially enforced consensus.

This is the future the AI safety movement is building. Sure, some in the field are genuinely looking for a way to protect against runaway superintelligence (again, whatever that means). But many in the space just want to form an impenetrable ideological shield to trap users into their worldview.

The Skynet scenario is largely a distraction. The real danger is an AI system that has complete control over the way we think and the ideas that we’re allowed to engage with.

AI deep fakes are advanced military PSYOP weapons which were deliberately released to the public to create the following conditions: How Deep Fake internet content will lead to digital IDs for every online interaction

“By arming the public with these weapons, everybody becomes an enemy combatant. Pretty soon the internet will be mired in total illusion. Then we will have justification for unprecedented security measures.”

Much more on this here: https://tritorch.substack.com/p/what-you-need-to-know-about-the-burgeoning

Never get a digital ID under any circumstances. They are specifically designed to lead directly to the end of freedom.

Anthropic vs. the Pentagon is going to be interesting. Anthropic wants a bare minimum of safeguards: no killer robots and no mass surveillance. The Pentagon objects, which makes me think the problem is people, not AI. At any rate, AI is coming no matter what we do about guardrails, so we had better figure out how to live with it.